Please seek expert statistical guidance if you want to pursue such analysis.Ĭopyright © 2000-2021 StatsDirect Limited, all rights reserved.

Generalisability might be explored through additional analyses that incorporate specific predictive uncertainties on top of the intrinsic uncertainties of the studies under review ( Ades and Higgins, 2005). Random effects is not a cure for difficulty in generalising the results of a meta-analysis to real-world situations. This should not be a problem with most meta-analyses, however do not use random effects models with sparse datasets without expert statistical guidance. More data are required for random effects models to achieve the same statistical power as fixed effects models, and there is no 'exact' way to handle studies with small numbers when assuming random effects. Many investigators consider the random effects approach to be a more natural choice than fixed effects, for example in medical decision making contexts ( Fleiss and Gross, 1991 DerSimonian and Laird 1985 Ades and Higgins, 2005). An alternative approach, 'random effects', allows the study outcomes to vary in a normal distribution between studies. With fixed effects all of the studies that you are trying to examine as a whole are considered to have been conducted under similar conditions with similar subjects – in other words, the only difference between studies is their power to detect the outcome of interest. If there is very little variation between trials then I² will be low and a fixed effects model might be appropriate. The L'Abbé plot can be used to explore the inconsistency of studies visually.Ĭhoosing between fixed and random effects models The non-central chi-square method is currently the method of choice (Higgins, personal communication, 2006) – it is computed if the 'exact' option is selected. A confidence interval for I² is constructed using either i) the iterative non-central chi-squared distribution method of Hedges and Piggott (2001) or ii) the test-based method of Higgins and Thompson (2002). Unlike Q it does not inherently depend upon the number of studies considered.

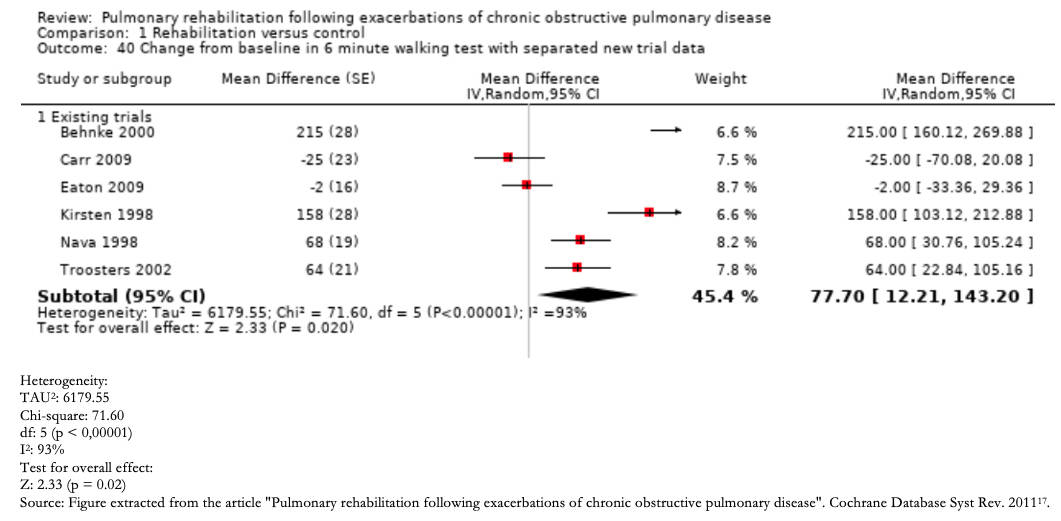

I² is an intuitive and simple expression of the inconsistency of studies’ results. The I² statistic describes the percentage of variation across studies that is due to heterogeneity rather than chance ( Higgins and Thompson, 2002 Higgins et al., 2003). It is arguably not possible to examine the null hypothesis that all studies are evaluating the same effect, by considering the only the summary data from the studies: The heterogeneity test results should be considered alongside a qualitative assessment of the combinability of studies in a systematic review. An additional test, due to Breslow and Day (1980), is provided with the odds ratio meta-analysis. 2003): Q is included in each StatsDirect meta-analysis function because it forms part of the DerSimonian-Laird random effects pooling method DerSimonian and Laird 1985). Conversely, Q has too much power as a test of heterogeneity if the number of studies is large ( Higgins et al. Q has low power as a comprehensive test of heterogeneity ( Gavaghan et al, 2000), especially when the number of studies is small, i.e. Q is distributed as a chi-square statistic with k (numer of studies) minus 1 degrees of freedom. The classical measure of heterogeneity is Cochran’s Q, which is calculated as the weighted sum of squared differences between individual study effects and the pooled effect across studies, with the weights being those used in the pooling method. Measuring the inconsistency of studies’ results StatsDirect calls statistics for measuring heterogentiy in meta-analysis 'non-combinability' statistics in order to help the user to interpret the results. Heterogeneity in meta-analysis refers to the variation in study outcomes between studies.
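The calculations behind Cochran's Q, I² and DerSimonian-Laird random effects pooling can be sketched in a few lines of Python. This is a minimal, generic inverse-variance sketch, not StatsDirect's implementation. It assumes each study supplies an effect on an additive scale (for example a log odds ratio) together with that effect's variance. The names `pool` and `MetaResult` are hypothetical. The sketch uses I² = 100% × (Q − df)/Q with negative values set to zero. It also uses the DerSimonian-Laird moment estimate τ² = max(0, (Q − df)/(Σw − Σw²/Σw)).

```python
import math
from typing import NamedTuple, Sequence


class MetaResult(NamedTuple):
    fixed: float      # inverse-variance fixed effect estimate
    fixed_se: float   # standard error of the fixed effect estimate
    q: float          # Cochran's Q
    df: int           # degrees of freedom, k - 1
    i2: float         # I² as a percentage, truncated at 0
    tau2: float       # DerSimonian-Laird between-study variance
    random: float     # DerSimonian-Laird random effects estimate
    random_se: float  # standard error of the random effects estimate


def pool(effects: Sequence[float], variances: Sequence[float]) -> MetaResult:
    """Hypothetical inverse-variance pooling with Q, I² and DerSimonian-Laird tau²."""
    k = len(effects)
    if k < 2 or k != len(variances) or any(v <= 0 for v in variances):
        raise ValueError("need >= 2 studies with matching, positive variances")
    # Fixed effect weights are the reciprocals of the within-study variances.
    w = [1.0 / v for v in variances]
    sw = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sw
    # Cochran's Q: weighted sum of squared deviations from the pooled effect.
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    df = k - 1
    # I² = 100% x (Q - df) / Q, with negative values set to zero.
    i2 = 100.0 * max(0.0, (q - df) / q) if q > 0 else 0.0
    # DerSimonian-Laird moment estimator of the between-study variance.
    c = sw - sum(wi * wi for wi in w) / sw
    tau2 = max(0.0, (q - df) / c)
    # Random effects weights add tau² to every study's variance.
    w_star = [1.0 / (v + tau2) for v in variances]
    sws = sum(w_star)
    re_est = sum(wi * yi for wi, yi in zip(w_star, effects)) / sws
    return MetaResult(fixed, math.sqrt(1.0 / sw), q, df, i2, tau2,
                      re_est, math.sqrt(1.0 / sws))
```

Consider `pool([0.0, 2.0, 4.0], [1.0, 1.0, 1.0])`. It gives Q = 8 on 2 degrees of freedom, I² = 75% and τ² = 3. The fixed and random effects estimates both equal 2.0 here only because the three studies carry equal weight. With unequal variances, adding τ² to each study's variance pulls the random effects weights closer together.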
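The test-based confidence interval for I² (method ii) can be sketched as follows. The sketch assumes the approximate standard error for ln(H) given by Higgins and Thompson (2002), where H² = Q/(k − 1) and I² = (H² − 1)/H². The function name `i2_test_based_ci` is hypothetical. The sketch does not implement the iterative non-central chi-square ('exact') method.

```python
import math
from statistics import NormalDist


def _i2_from_h(h: float) -> float:
    """Convert H (with H >= 1) to I² as a percentage."""
    return 100.0 * (h * h - 1.0) / (h * h)


def i2_test_based_ci(q: float, k: int, level: float = 0.95) -> tuple[float, float]:
    """Hypothetical helper: approximate CI for I² (%) from Cochran's Q and k studies.

    Uses the test-based standard error of ln(H) from Higgins and Thompson (2002).
    """
    if k < 3 or q <= 0:
        raise ValueError("need at least three studies and a positive Q")
    df = k - 1
    z = NormalDist().inv_cdf(0.5 + level / 2.0)
    ln_h = 0.5 * math.log(q / df)  # H = sqrt(Q / df)
    if q > k:
        se = 0.5 * (math.log(q) - math.log(df)) / (
            math.sqrt(2.0 * q) - math.sqrt(2.0 * k - 3.0))
    else:
        se = math.sqrt(1.0 / (2.0 * (k - 2)) * (1.0 - 1.0 / (3.0 * (k - 2) ** 2)))
    # H cannot fall below 1, which corresponds to I² = 0.
    h_lo = max(1.0, math.exp(ln_h - z * se))
    h_hi = max(1.0, math.exp(ln_h + z * se))
    return _i2_from_h(h_lo), _i2_from_h(h_hi)
```

Take Q = 8 from three studies. The 95% interval is roughly 17% to 92% around a point estimate of 75%. This illustrates how imprecise I² can be when the number of studies is small.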